Sohu, a major Chinese site, recently published a tech review sharing first impressions from white box testing of the Intel Core Ultra 5 245K and Intel Core Ultra 9 285K. The article included screenshots of the WebXPRT 4 results the testers produced during their evaluation. The screenshots showed that the testers had enabled WebXPRT 4’s Simplified Chinese UI. They’re not the first to use this option, and we’re glad it worked for them.

Though WebXPRT’s language settings menu has proven to be a popular feature for many users around the world, some folks may not even know the option is there. In today’s blog, we’ll go over the basics of this simple but helpful testing option.

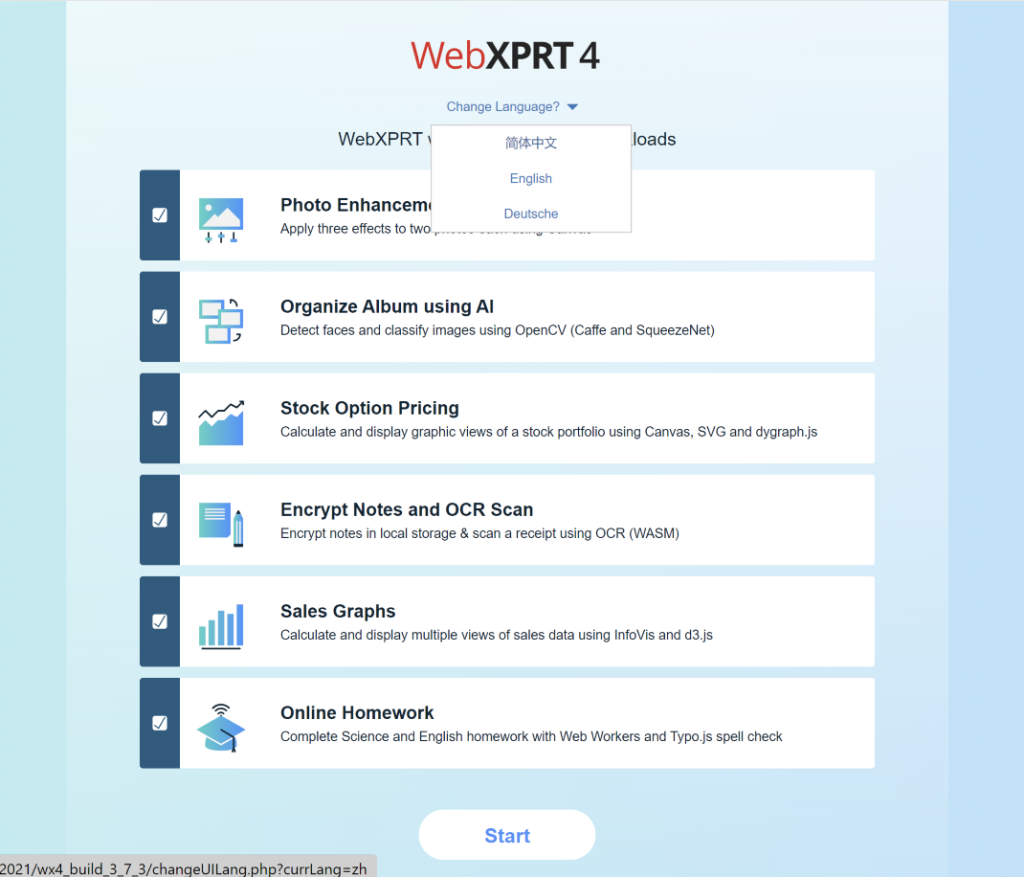

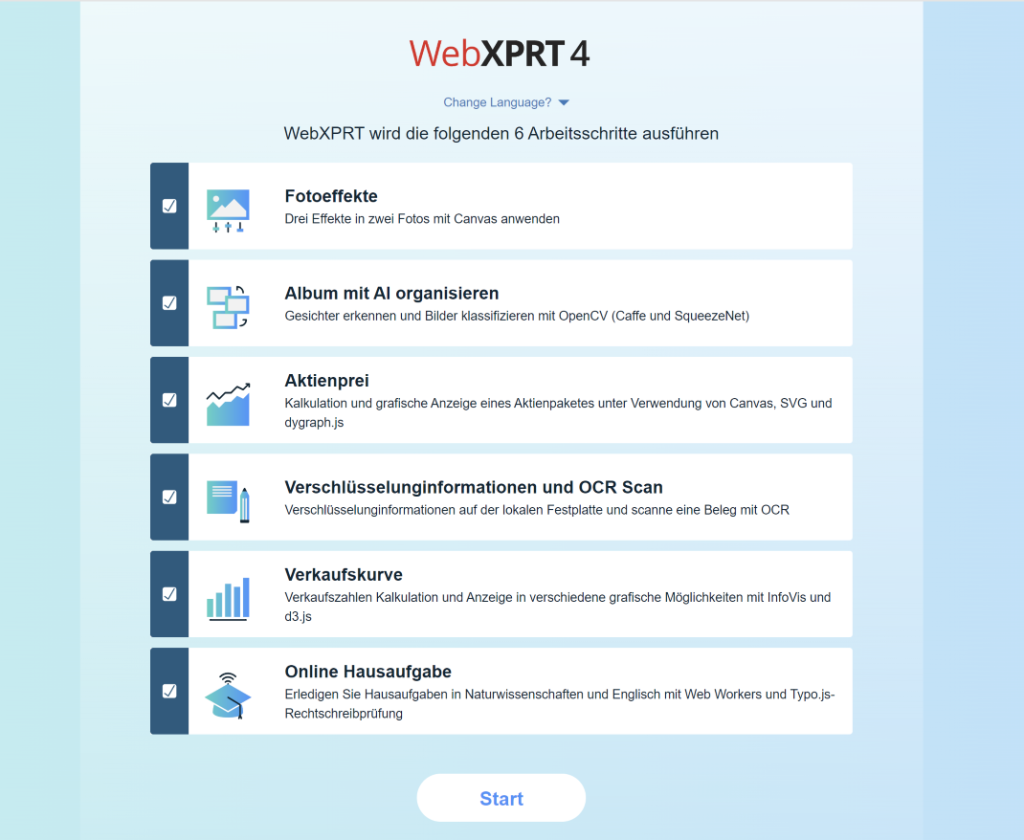

On the WebXPRT 4 Start screen, you can choose from three UI languages: Simplified Chinese, German, and English. We included Simplified Chinese and German because of the large number of tests we see from China and Central Europe. We wanted to make testing a little easier for users who prefer those languages, and we’re glad to see people using the options.

Changing languages in the WebXPRT UI is quick and easy. Locate the “Change Language?” prompt under the WebXPRT 4 logo at the top of the Start screen, and click or tap the arrow beside it. After the drop-down menu appears, select the language you want. The Start screen will then switch to that language, and the in-test workload headers and end-of-test results screen will appear in it as well.

Figures 1–3 below my sig show the “Change Language?” drop-down menu and how the Start screen appears when you select Simplified Chinese or German. It’s important to note that if you have a translation extension installed in your browser, it may override the WebXPRT UI by reverting the language to your browser’s default. You can avoid this conflict by temporarily disabling the translation extension while you run WebXPRT tests.

We hope WebXPRT 4’s language options will make the testing process easier for many users around the world. If you’re a frequent WebXPRT user and would like to see us add support for another language, please contact us. And, of course, if you have any questions about WebXPRT 4 testing, please let us know!

Justin