AIXPRT Community Preview 2 (CP2) has been generating quite a bit of interest among the BenchmarkXPRT Development Community and members of the tech press. We’re excited that the tool has piqued curiosity and that folks are recognizing its value for technical analysis. When talking with folks about test setup and configuration, we keep hearing the same questions:

- How do I find the exact toolkit or package that I need?

- How do I find the instructions for a specific toolkit?

- What test configuration variables are most important for producing consistent, relevant results?

- How do I know which values to choose when configuring options such as iterations, concurrent instances, and batch size?

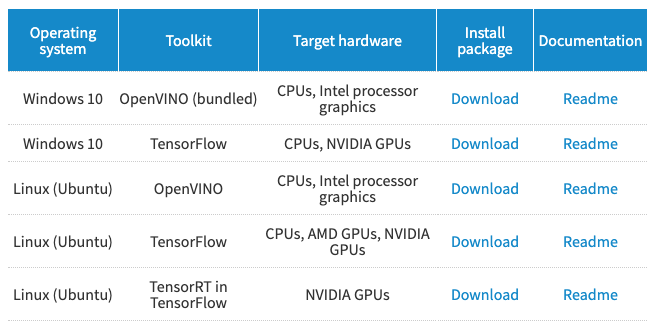

In the coming weeks, we’ll be working to provide detailed answers to questions about test configuration. In response to the confusion about finding specific packages and instructions, we’ve redesigned the CP2 download page to make it easier for you to find what you need. Below, we show a snapshot from the new CP2 download table. Instead of having to download the entire CP2 package that includes the OpenVINO, TensorFlow, and TensorRT in TensorFlow test packages, you can now download one package at a time. In the Documentation column, we’ve posted package-specific instructions, so you won’t have to wade through the entire installation guide to find the instructions you need.

We hope these changes make it easier for people to experiment with AIXPRT. As always, please feel free to contact us with any questions or comments you may have.

Justin