Last month, we gave readers a glimpse of the updates in store for the next WebXPRT, and now we have more news to report on that front.

The new version of WebXPRT will be called WebXPRT 3. WebXPRT 3 will retain the convenient features that made WebXPRT 2013 and WebXPRT 2015 our most popular tools, with more than 200,000 combined runs to date. We’ve added new elements, including AI, to a few of the workloads, but the test will still run in 15 minutes or less in most browsers and produce the same easy-to-understand results that help compare browsing performance across a wide variety of devices.

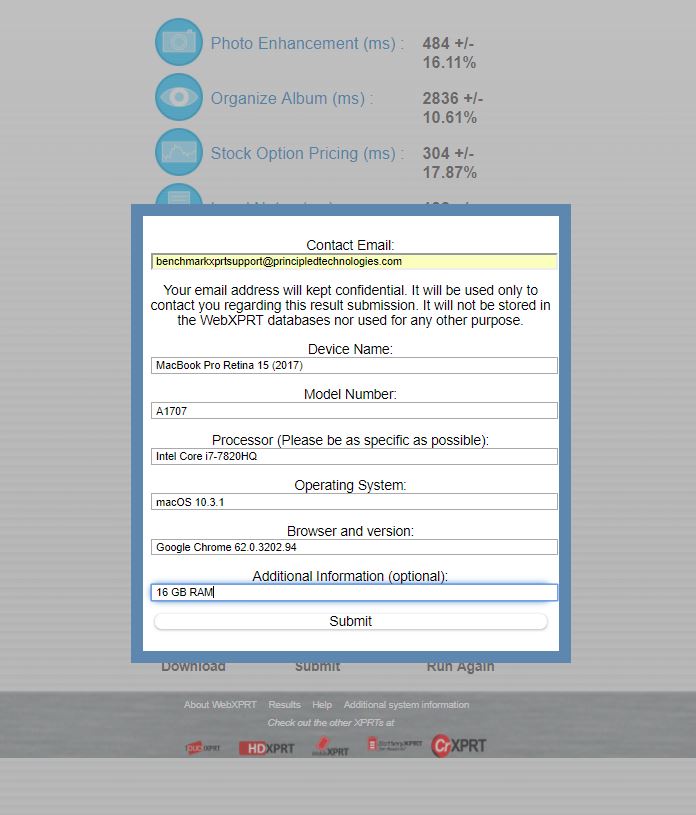

We’re also very close to publishing the WebXPRT 3 Community Preview. For those unfamiliar with our open development community model, BenchmarkXPRT Development Community members can preview and test new benchmark tools before we release them to the general public. Community previews are a great way for members to evaluate new XPRTs and send us feedback. If you’re interested in joining, you can register here.

In BatteryXPRT news, we recently began seeing unusual battery life estimates and high variance when running battery life tests at the default length of 5.25 hours. We think this may be due to changes in how new OS versions report battery life on certain devices, and we’re conducting extensive testing to learn more. In the meantime, we recommend that BatteryXPRT users adjust the test run time to allow for a full rundown.

Do you have questions or comments about WebXPRT or BatteryXPRT? Let us know!

Justin