Back in November, we discussed some of the trends we were seeing in the total number of completed and reported WebXPRT runs each month. The monthly run totals were increasing at a rate we hadn’t seen before. We’re happy to report that the upward trend has continued and even accelerated through the first quarter of this year! So far in 2024, we’ve averaged 43,744 WebXPRT runs per month, and our run total for the month of March alone (48,791) was more than twice the average monthly run total for 2023 (24,280).

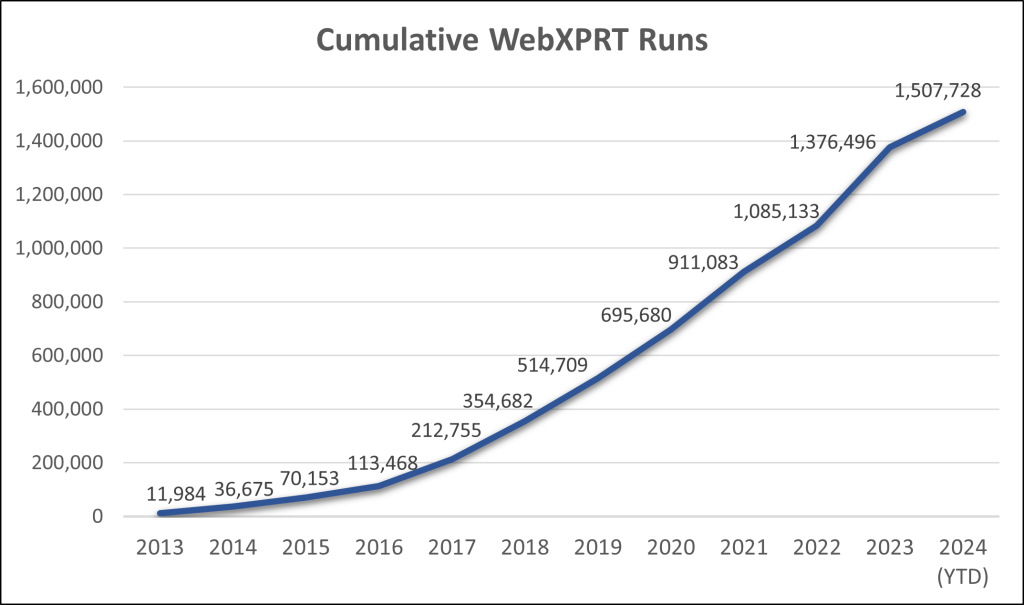

The rapid increase in WebXPRT testing has helped us reach the milestone of 1.5 million runs much sooner than we anticipated. As the chart shows, it took about six years for WebXPRT to log its first half-million runs and nine years to pass the million-run milestone. It has taken only about a year and a half to add another half-million.

This milestone means more to us than just reaching some large number. For a benchmark to be successful, it should ideally have widespread confidence and support from the benchmarking community, including manufacturers, OEM labs, the tech press, and other end users. When the number of yearly WebXPRT runs consistently increases, it’s a sign to us that the benchmark is serving as a valuable and trusted performance evaluation tool for more people around the world.

As always, we’re grateful to everyone who has helped us reach this milestone. If you have any questions or comments about using WebXPRT to test your gear, please let us know! And, if you have suggestions for how we can improve the benchmark, please share them. We want to keep making it better and better for you!

Justin